- Mathtrader7 renko ea

- Carvewright com bench

- Feng xing shang hair hotel

- Kinsey scale test

- Regular expression not string

- Use vlookup in excel 2016

- Fatal frame 2 faq

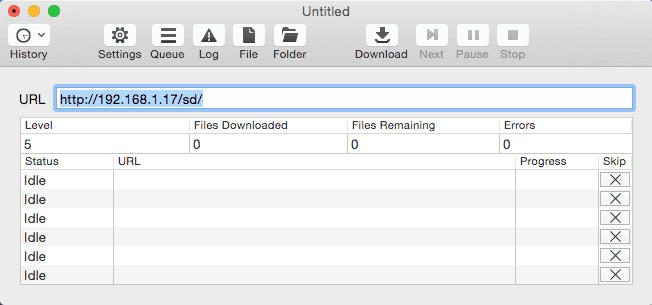

- How to use site sitesucker

- Google chrome not responding alert

- Songs from let it shine

- Beacon morris garage heater installation

- Chand chupa badal mein nivedita photos

- Phantom of the opera iron maiden guitar tabs

- Watch yuva hindi movie online

- Ashton kutchers digital defender

- Tecdoc 2017

- How to use SiteSucker for Mac

- How to use SiteSucker: install

- How to use SiteSucker: update

- How to use SiteSucker: archive

- How to use SiteSucker: full copy

Unlike the first two, SiteSucker is a premium app, and there is no free trial. The newest version of the app requires macOS 10.13 High Sierra, 10.14 Mojave, or later.

# How to use SiteSucker for Mac

SiteSucker works on a similar principle to the previous two options on the list, but it is designed specifically for Mac and iOS devices. When the website has finished downloading, look for an ‘index.html’ file and open it with your default browser.
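If you prefer to script that last step, the sketch below finds the shallowest `index.html` inside a download folder and opens it in the default browser. It is a minimal illustration, not part of SiteSucker; the function names `find_index` and `open_offline_copy` are hypothetical, and it assumes the mirrored site sits somewhere under the folder you pass in.

```python
import webbrowser
from pathlib import Path
from typing import Optional


def find_index(download_dir: str) -> Optional[Path]:
    """Return the shallowest index.html under download_dir, or None if absent."""
    candidates = sorted(
        Path(download_dir).rglob("index.html"),
        # Fewer path parts means closer to the top of the mirrored site.
        key=lambda p: (len(p.parts), str(p)),
    )
    return candidates[0] if candidates else None


def open_offline_copy(download_dir: str) -> bool:
    """Open the mirrored site's entry page in the default browser."""
    index = find_index(download_dir)
    if index is None:
        return False
    # as_uri() requires an absolute path, so resolve it first.
    return webbrowser.open(index.resolve().as_uri())
```

Picking the shallowest match matters because a mirrored site usually contains many `index.html` files, one per directory.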

# How to use SiteSucker: update

The best feature of this program is that it lets you re-scan downloaded websites while online to update them with new content. There are both free and paid versions of the tool.
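One way a downloader could decide what to refresh during a re-scan is to keep a fingerprint of each saved page and compare it with the newly fetched copy. The sketch below assumes SHA-256 content hashes and plain dictionaries as storage; it is an illustration of the idea, not how any particular tool implements updates (real tools may rely on HTTP headers such as `Last-Modified` or `ETag` to avoid downloading unchanged pages at all).

```python
import hashlib
from typing import Dict, List


def fingerprint(body: bytes) -> str:
    """SHA-256 hex digest of a page body."""
    return hashlib.sha256(body).hexdigest()


def pages_to_update(stored: Dict[str, str], fetched: Dict[str, bytes]) -> List[str]:
    """Return URLs whose content is new or differs from the stored fingerprint.

    stored:  URL -> fingerprint saved during the previous download
    fetched: URL -> body retrieved during the re-scan
    """
    changed = []
    for url, body in fetched.items():
        # A missing entry (new page) or a different hash both count as changed.
        if stored.get(url) != fingerprint(body):
            changed.append(url)
    return sorted(changed)
```

Only the URLs returned here would need to be rewritten on disk; everything else in the offline copy can stay as it is.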

# How to use SiteSucker: archive

SiteSucker is a Macintosh application that downloads websites from the Internet, and it can also be used to archive siloed pages to one's own website. Like WebCopy, it scans all the pages of a website and searches for links, data, and media, doing so recursively for each new page it encounters. You can download multiple websites, and the tool will organize them automatically.
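The recursive scan described above can be sketched as a breadth-first crawl that stays on the starting site. This is a simplified illustration, not SiteSucker's actual code: the `crawl` function and `LinkExtractor` class are hypothetical, and the page-fetching step is passed in as a `fetch` callable (assumed to return HTML, or `None` for pages that fail or are not HTML) so the logic can be tried without network access.

```python
from collections import deque
from html.parser import HTMLParser
from typing import Callable, Dict, List, Optional
from urllib.parse import urldefrag, urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collect href/src attribute values (links and media) from HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[str] = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                self.links.append(value)


def crawl(start_url: str, fetch: Callable[[str], Optional[str]],
          max_pages: int = 100) -> Dict[str, List[str]]:
    """Breadth-first crawl limited to start_url's host.

    Returns a mapping of each visited URL to the same-host links found on it.
    """
    host = urlparse(start_url).netloc
    seen = {start_url}
    queue = deque([start_url])
    visited: Dict[str, List[str]] = {}
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # fetch failed, or the resource is not HTML (e.g. an image)
        parser = LinkExtractor()
        parser.feed(html)
        found = []
        for link in parser.links:
            # Resolve relative links and drop "#fragment" parts.
            absolute, _ = urldefrag(urljoin(url, link))
            if urlparse(absolute).netloc != host:
                continue  # stay on the same site
            found.append(absolute)
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
        visited[url] = found
    return visited
```

A real downloader would also save each fetched resource to disk and respect `robots.txt`; the `max_pages` cap here simply keeps the sketch from running away on large sites.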

This program supports fewer systems than the others, since it runs only on iOS and macOS. To use it, enter the address of the website and continue the process. Once this is done, SiteSucker starts downloading the web page.

# How to use SiteSucker: install

HTTrack is an extremely popular tool for downloading websites, partly because it is open source and available for Linux, Windows, and Android. Once you have downloaded it, install it on your computer and start using it.

Starting the download begins the scanning and copying process. After the process is finished, you can see all the results and errors, as well as a ‘Sitemap’ option that shows the complete structure of the website with all its directories. To open the website, go to the location of your folder using File Explorer and launch ‘index.html’ with your default browser.

# How to use SiteSucker: full copy

SiteSucker lets you duplicate the directory structure of a website (including all of its data) on your device for full offline browsing.
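Duplicating a site's directory structure comes down to mapping each URL to a local file path. The sketch below shows one plausible mapping, assuming the host name becomes the top-level folder and directory-style URLs are saved as `index.html`; the `local_path` function is hypothetical and ignores query strings, which real tools handle in their own ways.

```python
from pathlib import PurePosixPath
from urllib.parse import unquote, urlparse


def local_path(url: str) -> str:
    """Map a URL to a relative file path that mirrors the site's directories."""
    parts = urlparse(url)
    path = unquote(parts.path)
    # Drop empty, "." and ".." segments so the result stays inside the mirror folder.
    segments = [s for s in path.split("/") if s not in ("", ".", "..")]
    # Directory-style URLs (no path, or ending in "/") get an index.html.
    if not segments or path.endswith("/"):
        segments.append("index.html")
    # Prefix with the host so several downloaded sites stay in separate folders.
    return str(PurePosixPath(parts.netloc, *segments))
```

With this layout, opening the top-level `index.html` in a browser and following relative links reproduces the site's structure offline.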

Along the way we have sought out like-minded individuals to exchange strategies, war stories, and cautionary tales of failures. Among us are represented the various reasons to keep data: legal requirements, competitive requirements, uncertainty of permanence of cloud services, distaste for transmitting your data externally (e.g. government or corporate espionage), cultural and familial archivists, internet collapse preppers, and people who do it themselves so they're sure it's done right. Everyone has their reasons for curating the data they have decided to keep (either forever or For A Damn Long Time™). And we're trying really hard not to forget.